The previous article showed the 18-35x gap between premium and alternative frontier models. Here’s what that looks like with real usage data.

Scope: This reflects a vacation month, so usage is lighter than typical.

The Numbers

Last month I processed 606.3 million tokens, almost all of it across GLM-5, GLM-5.1, Qwen 3.5, and Gemma 4, plus a small amount on GLM-4.5-air. The breakdown:

| Model | Tokens | Messages |
|---|---|---|
| GLM-5:cloud | 500.1M | 7,991 |
| GLM-5.1:cloud | 93.5M | 1,366 |
| Qwen3.5:cloud | 7.3M | 82 |
| Gemma4:31b-cloud | 4.9M | 60 |
| GLM-4.5-air | 0.5M | 11 |

Total: 606.3M tokens, 9,510 messages.

One developer. One month. Scale that to a team of 10 and you’d hit 6 billion tokens, which is roughly a $30,000-$76,000 monthly invoice at the premium rates in the table below, depending on the model. The gap compounds with every hire.
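As a quick sanity check, the totals and the team projection can be recomputed from the table. This is a minimal sketch: the figures are copied from the table above, and the team size of 10 assumes every developer matches this usage, which is a simplification.

```python
# Token (millions) and message counts copied from the usage table above.
usage = {
    "GLM-5:cloud": (500.1, 7991),
    "GLM-5.1:cloud": (93.5, 1366),
    "Qwen3.5:cloud": (7.3, 82),
    "Gemma4:31b-cloud": (4.9, 60),
    "GLM-4.5-air": (0.5, 11),
}

total_tokens_m = sum(tokens for tokens, _ in usage.values())
total_messages = sum(messages for _, messages in usage.values())

# Average tokens per message across all models.
avg_tokens_per_message = total_tokens_m * 1_000_000 / total_messages

# ASSUMPTION: every developer on the team matches this single-user usage.
TEAM_SIZE = 10
team_tokens_b = total_tokens_m * TEAM_SIZE / 1000  # billions of tokens

print(f"Total: {total_tokens_m:.1f}M tokens, {total_messages:,} messages")
print(f"Average: {avg_tokens_per_message:,.0f} tokens per message")
print(f"Team of {TEAM_SIZE}: {team_tokens_b:.2f}B tokens")
```

The average per message is large because each message carries its accumulated context, not just the text typed.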

Cost on Ollama Cloud: $20/month flat.

How does that work economically? Ollama hosts and runs open models on their own NVIDIA datacenter infrastructure — the same GLM-5, Qwen, DeepSeek models this series covers. They use native weights, not quantized versions. The $20/month subscription covers GPU compute time; usage is measured by actual hardware utilization, not token count. They’re not routing to third-party APIs or reselling another provider’s inference.

Premium API Equivalent

Same usage, premium pricing (April 2026 rates, assuming 70/30 input/output split):

| Model | Input Cost | Output Cost | Total |
|---|---|---|---|
| Claude Opus 4.7 | $2,122 | $4,547 | $6,669 |
| Claude Sonnet 4.6 | $1,273 | $2,728 | $4,002 |
| GPT-5.5 | $2,122 | $5,457 | $7,579 |
| Gemini 3 Pro | $849 | $2,183 | $3,032 |

Savings: 152-379x.

The gap isn’t abstract. It’s on my invoice.
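The table can be reproduced with a few lines of arithmetic. In this sketch the per-million-token rates are an assumption: they are back-solved from the table's own figures and the 70/30 split, not quoted from any provider's price list.

```python
TOTAL_TOKENS_M = 606.3       # millions of tokens, from the usage table
INPUT_SHARE = 0.70           # 70/30 input/output split stated above
FLAT_SUBSCRIPTION_USD = 20   # flat monthly subscription price

# ASSUMPTION: USD per million tokens as (input, output), back-solved from
# the table above rather than taken from an official price list.
rates = {
    "Claude Opus 4.7": (5.0, 25.0),
    "Claude Sonnet 4.6": (3.0, 15.0),
    "GPT-5.5": (5.0, 30.0),
    "Gemini 3 Pro": (2.0, 12.0),
}

def monthly_cost(input_rate, output_rate):
    """Return (input_cost, output_cost) in USD for the month's usage."""
    input_m = TOTAL_TOKENS_M * INPUT_SHARE
    output_m = TOTAL_TOKENS_M * (1 - INPUT_SHARE)
    return input_m * input_rate, output_m * output_rate

costs = {}
for model, (in_rate, out_rate) in rates.items():
    input_cost, output_cost = monthly_cost(in_rate, out_rate)
    costs[model] = input_cost + output_cost
    multiple = costs[model] / FLAT_SUBSCRIPTION_USD
    print(f"{model}: ${input_cost:,.0f} + ${output_cost:,.0f} "
          f"= ${costs[model]:,.0f} ({multiple:.0f}x)")
```

Changing `INPUT_SHARE` shows how sensitive the totals are to the split, since output tokens cost several times more than input tokens under these rates.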

The Local Option

The previous article covered self-hosted at enterprise scale. But the same math applies at home-lab scale.

I have an EVO X2 with a Ryzen AI Max+ 395 and 96GB RAM. It can run smaller models locally: Gemma 4, GLM-4.7 Flash, Qwen 3.5. The larger GLM-5 class models need more memory than this machine provides, so those stay on cloud.

What if I ran everything locally?

Running 606M tokens through local inference on smaller models:

- Power: ~80W average (NPU + RAM)

- Electricity (Ireland): €0.28/kWh

- Estimated cost: €23 ($25) in electricity

That’s roughly equivalent to the $20 cloud subscription. The difference isn’t cost — it’s data control.
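The runtime behind that estimate isn't stated, so here is a minimal sketch of the arithmetic with the hours left as a parameter. The 720-hour figure (30 days of continuous operation) is an assumption used only for comparison.

```python
POWER_W = 80               # average draw stated above
PRICE_EUR_PER_KWH = 0.28   # electricity price stated above
QUOTED_COST_EUR = 23       # estimate quoted above

def electricity_cost_eur(hours, power_w=POWER_W, price=PRICE_EUR_PER_KWH):
    """Energy cost for running at a constant power draw for `hours`."""
    kwh = power_w / 1000 * hours
    return kwh * price

# ASSUMPTION: a 30-day month of continuous operation (720 hours).
always_on = electricity_cost_eur(hours=30 * 24)

# Runtime at which the quoted estimate is reached, at the stated draw and price.
implied_hours = QUOTED_COST_EUR / (POWER_W / 1000 * PRICE_EUR_PER_KWH)

print(f"30 days continuous: EUR {always_on:.2f}")
print(f"Hours implied by EUR {QUOTED_COST_EUR}: {implied_hours:.0f}")
```

At a constant 80W, 30 days of continuous running comes out below the quoted figure, so the estimate presumably assumes a longer runtime or a higher draw under load. Either way the result stays in the same range as the subscription.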

Why I Don’t Run Local

The math works out. Why stay on cloud?

Model availability. GLM-5 and GLM-5.1 are my primary models. They don’t run efficiently on consumer NPUs yet. The 27B+ parameter class needs more hardware than I have.

Power cost. My EVO X2 doesn’t run 24/7. It wakes when needed for local inference, ComfyUI, or privacy-sensitive work. Most queries go to Ollama Cloud. I explained the full architecture in my home lab infrastructure post — the Pi orchestrates, cloud thinks, EVO handles what must stay local.

Convenience. $20/month covers everything. No model management, no quantization decisions, no memory tuning. I type, it responds.

But for sensitive data? If I had workloads that couldn’t leave my infrastructure, I’d shift Qwen and Gemma to local inference. Same work. Full sovereignty. ~$25 in electricity — but only when the EVO is awake. The Pi handles orchestration, not inference.

That’s the self-hosted pattern from the previous article — at personal scale.

The Hybrid Reality

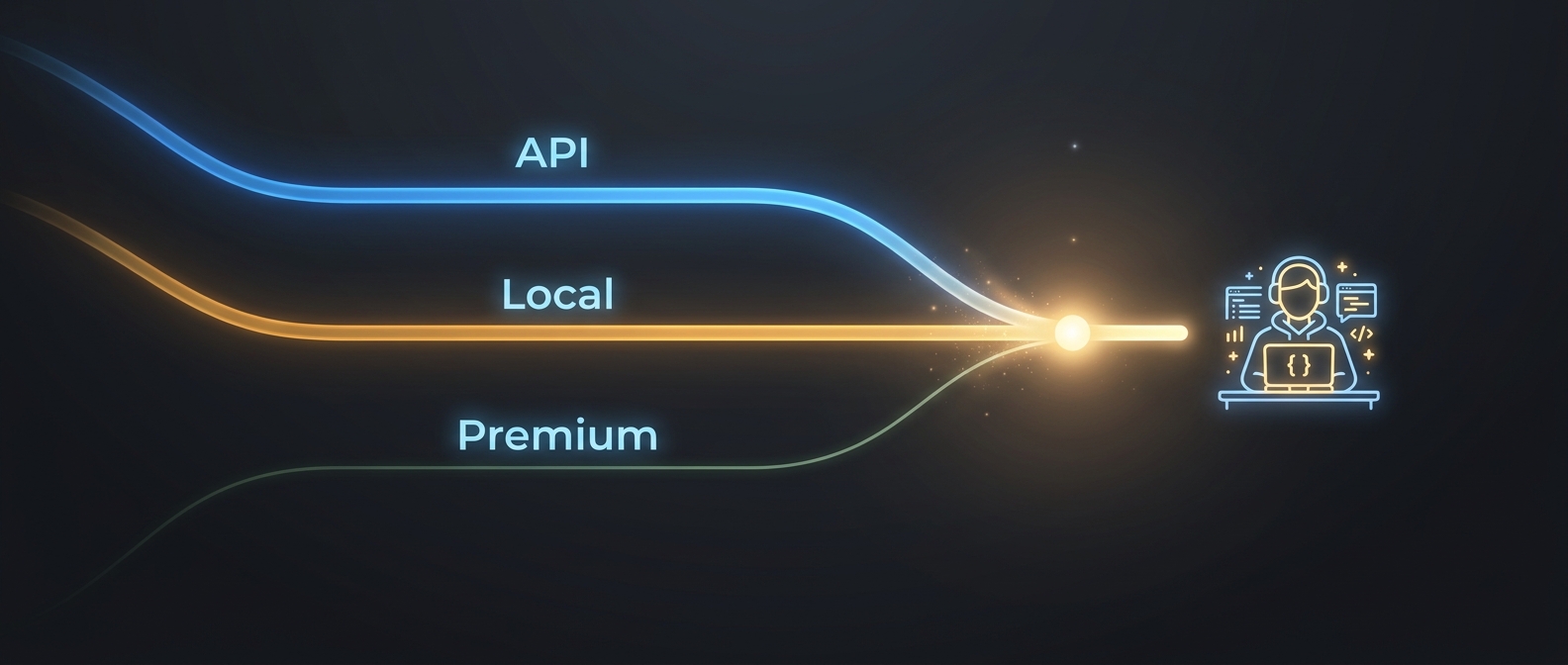

I use all three paths:

| Path | Workload | Why |
|---|---|---|
| Alternative API (Ollama Cloud) | GLM-5, GLM-5.1 | Large models, interactive use |
| Local-capable | Qwen 3.5, Gemma 4 | Could run local, don’t need to |
| Premium API | None | No current workload justifies 150-380x markup |

This matches the enterprise pattern: alternative APIs for heavy lifting, local for data sovereignty, premium only when you need what only premium provides.
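The decision rule behind the three paths can be sketched as a small function. The `Workload` type, its field names, and the path labels are hypothetical and exist only for illustration; the ordering (data sensitivity first, premium-only needs second, alternative API as the default) is my reading of the table.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    """Hypothetical description of a task, for illustration only."""
    name: str
    sensitive: bool = False            # data must stay on own infrastructure
    needs_premium_only: bool = False   # requires something only premium offers

def choose_path(workload: Workload) -> str:
    """Return one of the three paths from the table above."""
    if workload.sensitive:
        return "local"
    if workload.needs_premium_only:
        return "premium-api"
    return "alternative-api"

print(choose_path(Workload("research synthesis")))
print(choose_path(Workload("private documents", sensitive=True)))
```

Checking sensitivity first reflects the point that data control, not cost, is what moves a workload off the cloud.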

The Coding Layer (Not Shown Above)

The token counts in this article cover orchestration and research. They exclude my coding agent usage, which runs on separate infrastructure:

| Model | Role | Where |
|---|---|---|
| Qwen Coder | Code generation, refactoring | Local (EVO X2) + Cloud |
| DeepSeek V4 Flash | Fast execution, cheap iterations | Cloud (Ollama) |
| GLM-5 | Complex planning, architecture decisions | Cloud (Ollama) |
| DeepSeek V4 Pro | Alternative orchestrator | Cloud (piloting) |

The pattern: GLM-5 plans, Qwen Coder writes code, DeepSeek Flash handles quick iterations. Each layer uses the cheapest model that delivers acceptable quality for that task.
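The "cheapest model that delivers acceptable quality" rule can be written down generically. This is a sketch only: the `Candidate` type is hypothetical, and the model names and numbers in the usage example are invented placeholders, not measurements of any real model.

```python
from typing import NamedTuple, Optional, Sequence

class Candidate(NamedTuple):
    name: str
    cost: float      # any consistent cost unit
    quality: float   # score from your own evaluation, 0.0 to 1.0

def cheapest_acceptable(
    candidates: Sequence[Candidate], min_quality: float
) -> Optional[Candidate]:
    """Pick the lowest-cost candidate whose quality meets the threshold."""
    acceptable = [c for c in candidates if c.quality >= min_quality]
    return min(acceptable, key=lambda c: c.cost, default=None)

# Placeholder candidates: names and numbers are invented for illustration.
candidates = [
    Candidate("model-a", cost=0.5, quality=0.70),
    Candidate("model-b", cost=2.0, quality=0.85),
    Candidate("model-c", cost=10.0, quality=0.90),
]

choice = cheapest_acceptable(candidates, min_quality=0.8)
print(choice.name if choice else "no acceptable model")
```

The threshold is set per task, which is why planning and quick iterations can land on different models from the same candidate list.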

What Premium Would Buy Me

The previous article listed what you get for 18-35x:

- Familiarity (everyone knows Claude and GPT)

- Enterprise support and SLAs

- Compliance certifications (SOC 2, HIPAA)

- Safety alignment and audit trails

For my use case — coding assistance, research synthesis, task automation — the alternative frontier models match premium quality on structured work. I don’t process PII or regulated data. I don’t need enterprise support for a personal assistant.

This is the quality parity argument from the previous article made concrete. GLM-5 handles classification, extraction, synthesis, and routing as well as Claude Opus for my workloads. The 150-380x markup would buy me familiarity and enterprise SLAs I don’t need.

The gap exists. The question is whether what fills it is worth paying for.

The Pattern, Personal Scale

Enterprise break-even for self-hosting: 50M+ tokens/month.

Personal reality: I hit 606M tokens in a month. At $20 cloud vs $25 local electricity, they’re equivalent. The decision isn’t cost — it’s convenience vs control.

For most people reading this: the alternative API path is the default. Premium for frontier work. Alternative for everything else. Local when data matters.

Three paths. Same framework. Different scale.

See also: The Frontier Model Gap — the enterprise breakdown of premium vs alternative vs self-hosted